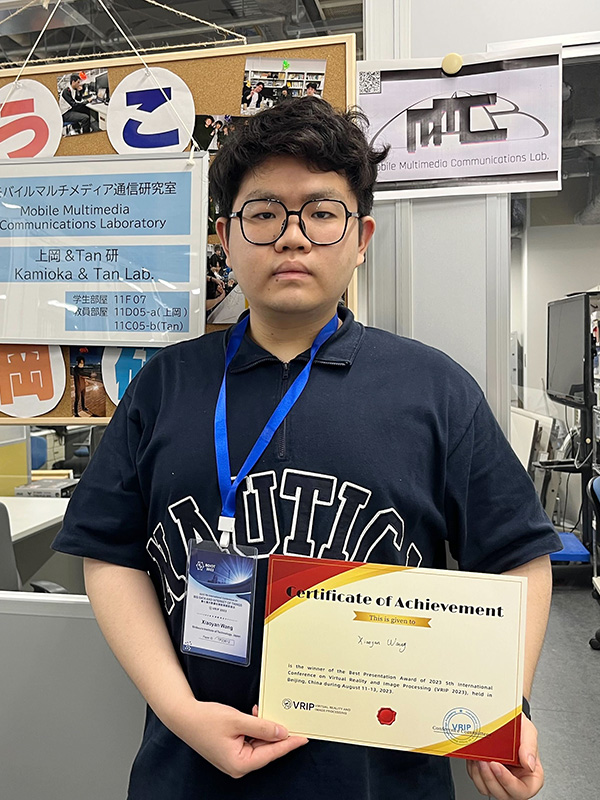

Wang Xiaoyan received the Best Presentation Award at the 5th International Conference on Virtual Reality and Image Processing (VRIP 2023)

- Graduate School of Engineering and Science

- Global Course of Engineering and Science

Wang Xiaoyan received the Best Presentation Award at the 5th International Conference on Virtual Reality and Image Processing (VRIP 2023), held in Beijing, China, on August 11-13, 2023.

【Awardee】

Wang Xiaoyan (Global Course of Engineering and Science / Grade: M2)

【Faculty Supervisor】

Prof. Eiji Kamioka

Associate Prof. Phan Xuan Tan

【Conference name】

5th International Conference on Virtual Reality and Image Processing (VRIP 2023)

【Award】

Best Presentation Award

【The title of the paper】

“Central Vision based Super-resolution for 360-Degree Videos”

With the rapid development of VR technology, 360-degree video is gradually coming into public view. Compared with normal video, it offers the user a more immersive experience, at the cost of high bandwidth consumption and transmission latency. Many studies address these problems by transmitting low-resolution video frames and applying super-resolution on the client side to reconstruct the resolution of the whole frame. However, in those methods, the computational latency caused by running super-resolution on either a whole video frame or the viewport portion of a frame is a critical problem. In this paper, the idea of central vision based super-resolution is considered for 360-degree video streaming. In particular, we adapt FOCAS, a super-resolution method that uses foveated rendering for normal 2D video, to 360-degree video. We conduct an experiment to verify the effectiveness of exploiting human central vision. The experimental results show that the approach reduces computational latency by 82% while maintaining competitive visual quality compared with existing super-resolution methods.